Domenico Viganola

Stockholm School of Economics

Co-Authors: Justin F. Landy, Miaolei (Liam) Jia, Isabel Ding, Domenico Viganola, Warren Tierney, Anna Dreber, Magnus Johannesson, Thomas Pfeiffer, Charles R. Ebersole, Eric L. Uhlmann (main co-authors)

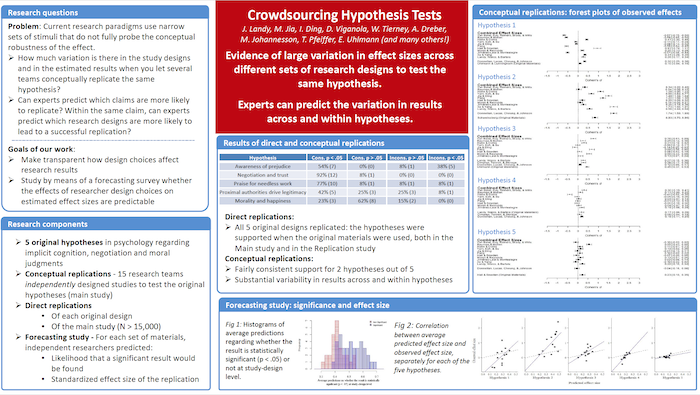

Abstract: Crowdsourcing Hypothesis Tests

To what extent are the results of research studies influenced by subjective decisions that shape research designs? Fifteen research teams independently designed studies to answer five original research questions in psychology. Participants (total N > 15,000) were then randomly assigned to complete one version of each study. Effect sizes varied dramatically across different sets of materials designed to test the same hypothesis: materials from different teams rendered statistically significant effects in opposite directions for four out of five hypotheses. Meta-analysis indicated significant support for two out of five hypotheses. Overall, none of the variability in effect sizes was attributable to the research teams, while some variability was attributable to the hypothesis being tested. In a forecasting survey, predictions of other scientists were strongly correlated with study results. Crowdsourced testing of research hypotheses helps reveal the true consistency of empirical support for a scientific claim.

Poster: Crowdsourcing Hypothesis Tests