Maya B. Mathur

Stanford University

Co-Authors: Tyler J. VanderWeele

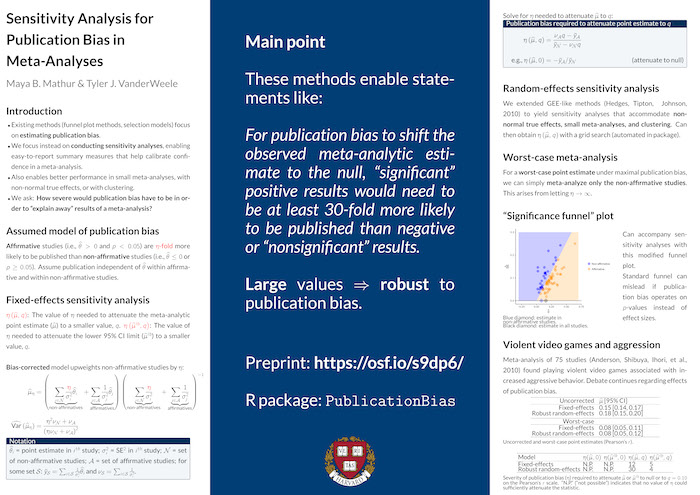

Abstract: Sensitivity analysis for publication bias in meta-analyses

We propose sensitivity analyses for publication bias in meta-analyses. We consider a publication process in which “statistically significant” results are more likely to be published than negative or “nonsignificant” results by an unknown ratio, eta. Our sensitivity analyses enable statements such as: “For publication bias to shift the observed point estimate to the null, ‘significant’ results would need to be at least 30-fold more likely to be published than negative or ‘nonsignificant’ results.” Comparable statements can be made regarding shifting to a chosen non-null value or shifting the confidence interval. To aid interpretation, we empirically estimate publication bias across disciplines. We show that a worst-case analysis under maximal publication bias simply entails meta-analyzing only the negative and “nonsignificant” studies; this method sometimes indicates that no amount of such publication bias could “explain away” the results. We provide an R package, PublicationBias.
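The selection process and worst-case analysis described in the abstract can be illustrated with a minimal Python sketch. It is not the PublicationBias package's interface, and it makes several simplifying assumptions that are not in the abstract: fixed-effect (inverse-variance) pooling, a two-sided 0.05 threshold with a normal approximation, and a correction that upweights each negative or "nonsignificant" study by eta to offset its lower publication probability. All function names are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def is_affirmative(estimate, variance, alpha=0.05):
    """True if the estimate is positive and 'significant' (two-sided p < alpha).

    Assumption: normal approximation for the p-value.
    """
    z = estimate / sqrt(variance)
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return estimate > 0 and p_value < alpha


def corrected_estimate(estimates, variances, eta):
    """Fixed-effect pooled estimate under an assumed selection ratio eta.

    Assumption: negative or 'nonsignificant' studies are eta times less likely
    to be published, so each one is upweighted by eta on top of its
    inverse-variance weight. With eta = 1 this is the usual pooled estimate.
    """
    numerator = 0.0
    denominator = 0.0
    for y, v in zip(estimates, variances):
        weight = (1.0 if is_affirmative(y, v) else eta) / v
        numerator += weight * y
        denominator += weight
    return numerator / denominator


def worst_case_estimate(estimates, variances):
    """Pool only the negative and 'nonsignificant' studies (maximal bias)."""
    kept = [(y, v) for y, v in zip(estimates, variances)
            if not is_affirmative(y, v)]
    if not kept:
        raise ValueError("no negative or nonsignificant studies to pool")
    numerator = sum(y / v for y, v in kept)
    denominator = sum(1.0 / v for _, v in kept)
    return numerator / denominator


if __name__ == "__main__":
    # Made-up point estimates and variances, for illustration only.
    estimates = [0.50, 0.40, 0.10, -0.05, 0.30]
    variances = [0.01, 0.02, 0.04, 0.05, 0.01]
    for eta in (1, 5, 30):
        print(eta, corrected_estimate(estimates, variances, eta))
    print("worst case:", worst_case_estimate(estimates, variances))
```

Under these assumptions, the corrected estimate approaches the worst-case estimate as eta grows. If the worst-case estimate is still far from the null, no value of eta in this model would shift the pooled estimate to the null.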

Poster: Sensitivity analysis for publication bias in meta-analyses